A Trip Through Dependency Hell

Recently I've had to wrestle a few web apps trapped in dependency hell and figured it would be worthwhile reviewing its various forms. Specific items here may be better known by other names, but everything here involves dependencies in one way or another. With luck, this post might help others get the jump on looming dependency issues before they fester into the various manifestations of hell.

Too Many Dependencies

Having a large number of direct dependencies (even development-specific ones) translates to more maintenance work. What counts as "too many" typically scales with how tightly coupled the project is to each dependency since, depending on how a dependency is maintained (if at all; more on that later), major version bumps may necessitate a substantial amount of work (also discussed later).

The primary problem with having a large number of direct dependencies (and indirect dependencies in some scenarios) is that it has a nasty tendency to act as a multiplier for the other issues that pave the road to dependency hell. Have a read of Todd Ginsberg's The Art of Picking Dependencies post for some guidance on how to avoid creating this problem.

Conflicts Between Transitive Dependencies

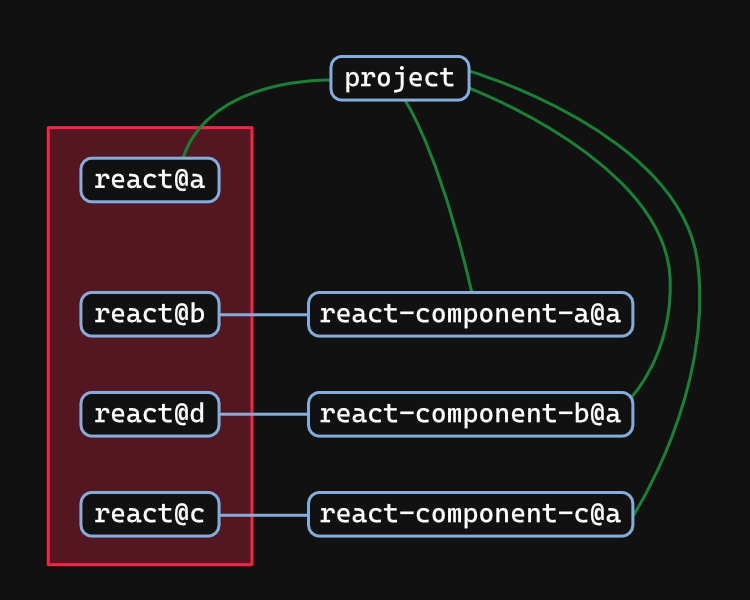

In the JavaScript ecosystem (in its current state), conflicts between transitive dependencies tend to go unnoticed. This is due to an amazing and terrible feature of npm: multiple versions of a dependency can be installed side by side (even as direct dependencies, if dependency aliases are used).

In React, transitive dependency conflicts are quite common when using component libraries. Often the only hint that something isn't right will be obscure issues which are remarkably difficult to debug. Package authors may attempt to avoid this issue by declaring dependencies such as React as peer dependencies and excluding them from their standard dependencies list, effectively shifting the responsibility for including the package up the dependency chain (a strategy commonly employed by ESLint plugins). The approach is not perfect (it creates more work and is confusing to those new to the ecosystem) but for the moment it is better than nothing. Just keep in mind that most packages don't put in the effort to resolve this issue.

Transitive dependency issues will hopefully become less common with npm v7, as the way peer dependencies are handled is changing, though this depends entirely on the ecosystem using them properly. Give it a few years and things should improve; old packages (and by extension, old projects that use them) are unlikely to benefit for obvious reasons (old package versions becoming mutable would be a terrifying prospect).

The best way to get a handle on this? Look for multiple versions of the same dependency being used. Let me know if you find a better strategy; in the meantime I'll be brainstorming a more comprehensive solution.
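For npm v7+ projects, one way to do that hunt is to read package-lock.json directly. The following is a minimal sketch, assuming a lockfile with lockfileVersion 2 or 3, whose flat `packages` map is keyed by install paths such as `node_modules/a/node_modules/b`. It also assumes that aliased installs record the real package name in a `name` field. The function names are hypothetical and workspaces are not specially handled. For a one-off check of a single package, `npm ls <name>` will also list every installed copy.

```typescript
// The parts of an npm v7+ package-lock.json (lockfileVersion 2 or 3) this sketch reads.
interface LockfileEntry {
  version?: string;
  name?: string; // assumed present when a package is installed under an alias
}

interface Lockfile {
  packages?: Record<string, LockfileEntry>;
}

// "node_modules/ui-kit/node_modules/@scope/ui" -> "@scope/ui"
function nameFromPath(path: string): string {
  const marker = "node_modules/";
  const index = path.lastIndexOf(marker);
  return index === -1 ? path : path.slice(index + marker.length);
}

// Map each package installed at more than one version to its versions (sorted as strings).
function findDuplicateVersions(lock: Lockfile): Map<string, string[]> {
  const seen = new Map<string, Set<string>>();
  for (const [path, entry] of Object.entries(lock.packages ?? {})) {
    const version = entry.version;
    // Skip the root project ("") and entries without a version, such as links.
    if (path === "" || version === undefined) continue;
    const name = entry.name ?? nameFromPath(path);
    const versions = seen.get(name) ?? new Set<string>();
    versions.add(version);
    seen.set(name, versions);
  }

  const duplicates = new Map<string, string[]>();
  seen.forEach((versions, name) => {
    if (versions.size > 1) duplicates.set(name, Array.from(versions).sort());
  });
  return duplicates;
}
```

Feed it the parsed lockfile (for example `findDuplicateVersions(JSON.parse(text))`, where `text` holds the contents of package-lock.json) and review each reported package by hand. A duplicate is not automatically a bug, but it is exactly where the obscure issues described above tend to hide.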

The Update Trap

Consider the following characteristics a dependency update may include:

- Major revision to the API surface and concepts

- Short "grace period" (time before focus shifts to the next update and its update process), if one is provided at all

- Major dependency updates which the consumer needs to update to as well (common with frameworks)

Now suppose you have many updates pending, big and small, that feature constraints like those above. Some characteristics may even intersect (e.g. two or more libraries needing other libraries to be updated first, which in turn have their own library updates that must be performed first). As ever more updates pile up, the amount of work required quickly increases, growing at an exponential rate if you are unlucky (or have a circa-2020 JS project).

You haven't managed software until you've hit this dilemma:

- Release depends on x feature

- x feature depends on y tech upgrade

- y tech upgrade will break everything

What is the name for this and when will it stop?

— Christian Findlay (@CFDevelop) August 20, 2020

Welcome to the update trap! While it is possible to escape, the planning and research required are often overwhelming. The knee-jerk reaction is to jump straight to the latest versions (falling for the trap), a mistake that will typically hurt a team's (or your own) ability to push out fixes and functionality until the upgrade is complete. Continuing to ship fixes and features alongside the upgrade instead means dealing with merge conflicts, an extremely undesirable outcome as it means more time in development and a harder time justifying completion of the upgrade (if that applies to your exact scenario).

Another temptation here will be to rebuild the project with the updated dependencies baked in. While this can be a valid strategy, it is not to be taken lightly. A good understanding of what is required and a comparison against the alternative options is a must, as the cost is significant (even if the result is potentially more stable).

Escaping this mess will require lots of notes and an iterative update approach. Just be mindful of the other component of this trap: in extreme scenarios, updating the existing project may prove to be more time consuming than rebuilding the app.
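Working out the order for those iterations is essentially a topological sort over "update X needs update Y first" constraints. The following is a minimal sketch using Kahn's algorithm. The update names used below are hypothetical, and the prerequisites themselves have to come from your own notes and changelog research. If the function throws, the prerequisites are circular and some updates will have to land together.

```typescript
// Each key is an update to perform; its value lists the updates that must land first.
type UpdatePlan = Record<string, string[]>;

// Order updates so every prerequisite comes before the updates waiting on it (Kahn's algorithm).
// Throws when the prerequisites are circular, since no one-at-a-time order exists then.
function orderUpdates(plan: UpdatePlan): string[] {
  const unmet = new Map<string, number>(); // update -> prerequisites not yet scheduled
  const waiting = new Map<string, string[]>(); // prerequisite -> updates waiting on it

  const register = (name: string): void => {
    if (!unmet.has(name)) unmet.set(name, 0);
    if (!waiting.has(name)) waiting.set(name, []);
  };

  for (const [update, prereqs] of Object.entries(plan)) {
    register(update);
    for (const prereq of prereqs) {
      register(prereq);
      unmet.set(update, (unmet.get(update) ?? 0) + 1);
      waiting.get(prereq)?.push(update);
    }
  }

  // Updates with no prerequisites can start immediately; sorted for a stable result.
  const ready = Array.from(unmet.keys()).filter((name) => unmet.get(name) === 0).sort();
  const order: string[] = [];

  while (ready.length > 0) {
    const next = ready.shift() as string;
    order.push(next);
    for (const dependent of waiting.get(next) ?? []) {
      const left = (unmet.get(dependent) ?? 0) - 1;
      unmet.set(dependent, left);
      if (left === 0) ready.push(dependent);
    }
  }

  if (order.length !== unmet.size) {
    throw new Error("Circular update prerequisites: some updates must land together");
  }
  return order;
}
```

For example, if `framework@5` needs `router@3` and `state@2`, `router@3` needs `build-tool@4`, and `ui-kit@2` needs `framework@5`, the sketch yields build-tool@4, state@2, router@3, framework@5, then ui-kit@2. Each step is a point where the project can be stabilised and released before moving on.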

Of course, prevention is better than the cure. Keeping up to date means less stress (from navigating update messes) and less time lost overall (massive update efforts can take considerable planning time), and it avoids the update trap entirely, which matters because escapes are not always successful.

Implicit Dependency on Tooling or Runtime

Perhaps you've heard the term "bashism", an informal name for Bash-specific syntax that slips into shell scripts when developers assume the shell will be Bash, resulting in errors when the script is run by a different shell.

I often see the same sort of problem in the JS ecosystem.

- Most (but not all) packages are created for Node.js, and may only work there.

- Browser targeted packages may only work in browsers.

- Some React component libraries only function when processed via webpack (ES module spec violations are a common cause of this), and with certain configurations at that.

- Packages declaring themselves as containing ES Module code, but not conforming to standards (and at worst this source is entirely untested).

- Packages having undeclared dependencies on certain APIs (e.g. requiring the DOM when the package's scope doesn't indicate any need for it; it's always fun having to patch it in with JSDOM, all the while hoping code somewhere else isn't doing the same thing).
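From the package author's side, one way to avoid an undeclared runtime dependency is to probe for a capability rather than assume it. The following is a minimal sketch built around a hypothetical library helper that wants the page title. The globals are typed locally, so it compiles without DOM or Node type definitions, and they can be injected for testing.

```typescript
// The globals this sketch probes, typed locally so it compiles without DOM or Node type definitions.
interface ProbedGlobals {
  document?: { title?: string };
  process?: { versions?: { node?: string } };
}

type Runtime = "browser" | "node" | "unknown";

const hostGlobals = globalThis as unknown as ProbedGlobals;

// Report which runtime we appear to be in, based on what actually exists rather than assumptions.
function detectRuntime(g: ProbedGlobals = hostGlobals): Runtime {
  if (g.document !== undefined) return "browser"; // note: Node with a JSDOM-patched global also lands here
  if (typeof g.process?.versions?.node === "string") return "node";
  return "unknown";
}

// Hypothetical library helper: uses the DOM when present and otherwise falls back,
// instead of forcing consumers to patch in a DOM implementation.
function currentTitle(fallback: string, g: ProbedGlobals = hostGlobals): string {
  return g.document?.title ?? fallback;
}
```

The key design choice is that `currentTitle` degrades gracefully instead of throwing. Capability probing alone doesn't prove a package works everywhere, though, which is where multi-runtime testing comes in.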

It's a particularly frustrating issue, and one which only has a hope of being resolved if multi-runtime testing becomes standard practice within the community. For the moment this is not an easy problem to address, though I do hope to change that in the future with the theory test framework project.

Unmaintained Dependencies

An unmaintained dependency is problematic at the best of times. If you're unlucky, it may block you from performing updates. The best way to handle this?

- Make sure dependencies are maintained before depending on them (and not just charging forward which may complicate your own maintenance efforts).

- Look to The Art of Picking Dependencies.

- If possible, offer (and supply) support for maintenance. This could be your own time, a donation, or something else entirely (typically creators don't want their projects to wither so they will seek alternative maintenance strategies).
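For npm packages, a rough first pass at "is this maintained?" is the publish history in the registry metadata served at `https://registry.npmjs.org/<name>`. Its `time` object holds `created`, `modified`, and one timestamp per published version. The following is a minimal sketch in which the helper names are hypothetical and the one-year threshold is an arbitrary assumption. Treat a positive result as a prompt for a closer look rather than a verdict, since some packages are simply finished.

```typescript
// Subset of the npm registry document ("packument") served at https://registry.npmjs.org/<name>.
interface Packument {
  name: string;
  // "created", "modified", plus one ISO 8601 timestamp per published version.
  time: Record<string, string>;
}

const MS_PER_DAY = 24 * 60 * 60 * 1000;

// Most recent publish time across all versions. "modified" is skipped because it
// may change without a new release (an assumption of this sketch).
function lastPublished(meta: Packument): Date | undefined {
  let latest: number | undefined;
  for (const [key, iso] of Object.entries(meta.time)) {
    if (key === "created" || key === "modified") continue;
    const t = Date.parse(iso);
    if (!Number.isNaN(t) && (latest === undefined || t > latest)) latest = t;
  }
  return latest === undefined ? undefined : new Date(latest);
}

// Rough heuristic: nothing published within maxAgeDays (an arbitrary default) suggests a closer look.
function looksStale(meta: Packument, now: Date, maxAgeDays = 365): boolean {
  const last = lastPublished(meta);
  if (last === undefined) return true;
  return (now.getTime() - last.getTime()) / MS_PER_DAY > maxAgeDays;
}
```

On Node 18 or later, the metadata could be fetched with the global `fetch` and passed in after `.json()`. Open issues, unanswered pull requests, and the number of active maintainers are worth checking alongside it.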

Rapid Evolution

Projects that are willing to innovate are great, but they can be problematic if the rate of change outstrips your capacity to keep up to date (which often takes longer with bolder projects). Weigh the risk vs. reward proposition for your project before betting on a project that is experiencing rapid evolution. If you are fond of a particular incarnation, someone may have forked the project to keep it maintained as is (or perhaps you could fork it yourself).

If I have one piece of advice on this, it's: don't overextend yourself. Depending on too many rapidly evolving projects is untenable.

Undeclared Dependencies

This can manifest in two ways (which are not mutually exclusive):

- The project uses dependencies which are brought in indirectly via other dependencies.

- The same as above, except it's one of your dependencies doing this instead.

Using a dependency manager that isolates each package to only its declared dependencies (e.g. pnpm) is useful for detecting these issues (pnpm also provides hooks to manually correct them). No doubt there are linters out there able to detect these issues automatically (important for languages like PHP, where the package manager is unable to isolate packages).
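In JS projects, a linter rule such as eslint-plugin-import's `import/no-extraneous-dependencies` is the practical route. Still, the core check is simple enough to sketch: collect the package names the source imports and compare them against what package.json declares. This sketch uses a regular expression rather than a real parser, so unusual syntax will be missed or misread. Bare Node built-ins such as `fs` also have to be passed in via the hypothetical `ignore` parameter.

```typescript
// Captures the specifier in `from "x"`, `import "x"`, `import("x")` and `require("x")`.
// A regular expression is only an approximation; a real linter parses the source.
const SPECIFIER_PATTERN = /(?:\bfrom\s*|\bimport\s*\(?\s*|\brequire\s*\(\s*)["']([^"']+)["']/;

// Map an import specifier to the package providing it, or undefined for local files and "node:" built-ins.
function packageName(specifier: string): string | undefined {
  if (specifier.startsWith(".") || specifier.startsWith("/") || specifier.startsWith("node:")) {
    return undefined;
  }
  const parts = specifier.split("/");
  // "@scope/name/sub/path" -> "@scope/name"; "lodash/fp" -> "lodash".
  return specifier.startsWith("@") ? parts.slice(0, 2).join("/") : parts[0];
}

// List packages imported by the given sources that are not in `declared` (or `ignore`).
function findUndeclaredImports(
  sources: string[],
  declared: ReadonlySet<string>,
  ignore: ReadonlySet<string> = new Set<string>(),
): string[] {
  const missing = new Set<string>();
  for (const source of sources) {
    const pattern = new RegExp(SPECIFIER_PATTERN.source, "g"); // fresh lastIndex per source
    let match: RegExpExecArray | null;
    while ((match = pattern.exec(source)) !== null) {
      const name = packageName(match[1]);
      if (name !== undefined && !declared.has(name) && !ignore.has(name)) missing.add(name);
    }
  }
  return Array.from(missing).sort();
}
```

In practice `declared` would be built from the keys of `dependencies`, `devDependencies`, and `peerDependencies` in package.json. Anything reported is being pulled in indirectly and should either be declared or removed.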

Ecosystem Issues

There are a few ecosystem-level issues which can help an instance of dependency hell form.

Update Chains

The deeper a particular dependency is along a dependency chain, the longer it will generally take updates to propagate into individual dependency graphs (particularly for major versions). If this dependency is frequently used, then odds are it will take even longer for the update to propagate to all dependency chains in a given dependency graph (perhaps it never will in some cases).

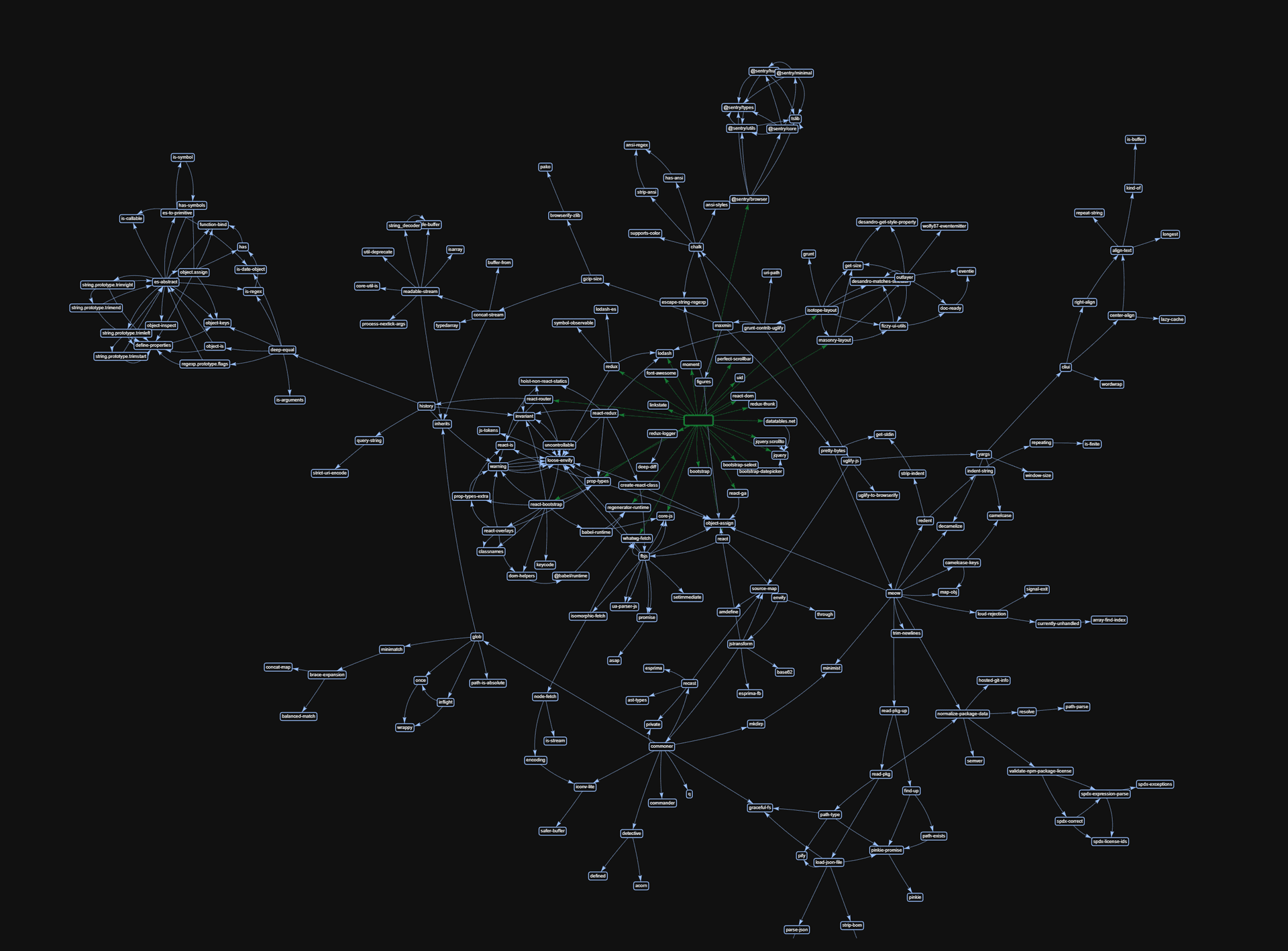

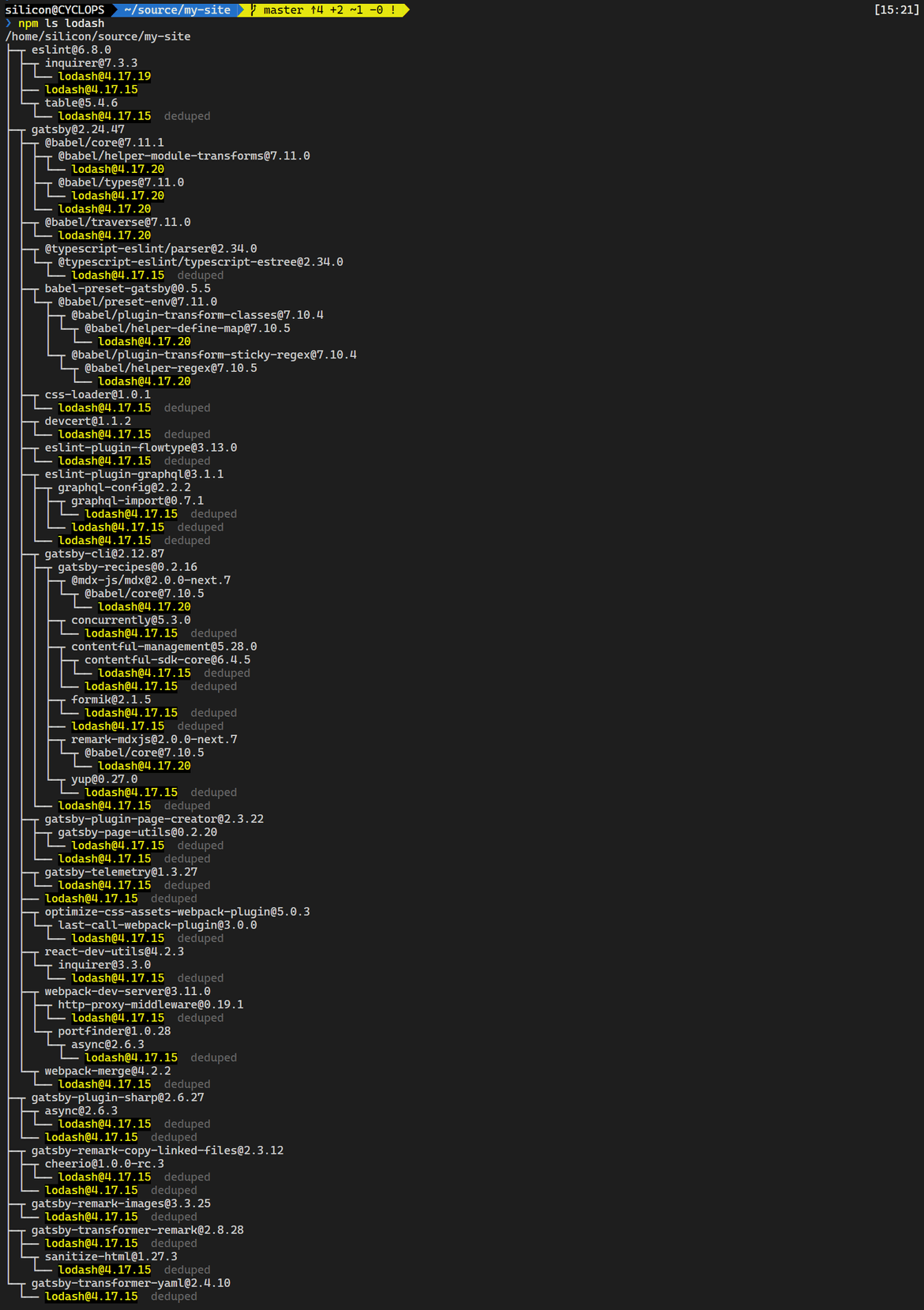

Take the following list of lodash versions.

- 4.17.19

- 4.17.15

- 4.17.20

All in all, not too bad. But now look at the dependency graph.

Many packages (mainly build tools, where reliability is paramount) pin dependency versions, which is why there is a spread of lodash versions that, according to semver, should all be compatible with every package. Packages that pin versions generally raise the pinned version with their next release (often on a schedule), but suppose a hypothetical lodash v5 was released. Depending on the API surface changes, it would take a long time for the update to fully propagate through dependency graphs. Even if v5 were highly compatible, its status as a major version update means some automated update mechanisms (e.g. Dependabot, subject to the exact configuration) won't present the update, requiring a human to update manually.

A mechanism through which newer dependency versions can be approved for already released package versions (perhaps with passing unit tests providing a means of automation) would go a long way to addressing this problem. Such a system could likely also help detect accidental semver breaks. Maybe one day, maybe one day...

Narrow Version Ranges

Common in dev tooling, this issue tends to make the above even worse. Thankfully it is rarer outside of dev tools, but still more common than it realistically should be.

The JS (and PHP) ecosystems have settled on semver, so don't fight it. Unless a specific project has stated it does not follow this standard, a version range like ^1.2.0 is perfectly fine. If worried about spontaneous breakages, rely on the lockfile (package-lock.json, pnpm-lock.yaml, or composer.lock) to keep changes from happening without a deliberate update (that is the file's purpose). I will grant that npm, yarn, and pnpm are rather lax compared to Composer.
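To see why narrow pins make things worse, here is a simplified model of the question a deduplicating package manager has to answer: is there a single version that satisfies every requester? This minimal sketch supports only exact pins and caret ranges on plain MAJOR.MINOR.PATCH versions, with no pre-releases, tilde, or compound ranges. Real tooling should use the `semver` package that npm itself relies on. The available versions in the usage note are the lodash versions listed earlier.

```typescript
type Version = [number, number, number];

// Parse a plain "MAJOR.MINOR.PATCH" string; pre-release and build tags are out of scope here.
function parseVersion(v: string): Version {
  const m = /^(\d+)\.(\d+)\.(\d+)$/.exec(v);
  if (m === null) throw new Error(`Unsupported version: ${v}`);
  return [Number(m[1]), Number(m[2]), Number(m[3])];
}

// Negative if a < b, zero if equal, positive if a > b.
function compareVersions(a: string, b: string): number {
  const x = parseVersion(a);
  const y = parseVersion(b);
  for (let i = 0; i < 3; i++) {
    if (x[i] !== y[i]) return x[i] - y[i];
  }
  return 0;
}

// Supports exact pins ("4.17.15") and caret ranges ("^4.17.15") only.
// A caret allows any newer version that keeps the left-most non-zero component unchanged.
function satisfies(version: string, range: string): boolean {
  if (!range.startsWith("^")) return compareVersions(version, range) === 0;
  const base = range.slice(1);
  if (compareVersions(version, base) < 0) return false;
  const [baseMajor, baseMinor] = parseVersion(base);
  const [major, minor] = parseVersion(version);
  if (baseMajor > 0) return major === baseMajor; // ^1.2.3 -> >=1.2.3 <2.0.0
  if (baseMinor > 0) return major === 0 && minor === baseMinor; // ^0.2.3 -> >=0.2.3 <0.3.0
  return compareVersions(version, base) === 0; // ^0.0.3 -> >=0.0.3 <0.0.4
}

// Highest available version every requester accepts, or undefined when no single copy
// can serve them all (so more than one copy ends up installed).
function sharedVersion(available: string[], ranges: string[]): string | undefined {
  const candidates = available.filter((v) => ranges.every((r) => satisfies(v, r)));
  return candidates.sort(compareVersions).pop();
}
```

With 4.17.15, 4.17.19, and 4.17.20 available, requesters asking for ^4.17.15 and ^4.17.19 can share a single 4.17.20. Add exact pins on both 4.17.15 and 4.17.19, however, and no single version works, which is how pinned tooling ends up multiplying copies in the dependency graph.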

Duplicate Packages And Package Versions

I'll skip covering what this is (see the sections above); it mainly impacts npm and its derivatives. All kinds of weird issues can arise from this, such as broken instanceof comparisons. Using pnpm helps a lot, but won't necessarily cover all situations.
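Here is a self-contained illustration of the instanceof breakage. Each installed copy of a package evaluates its own module and so defines its own class, which means an object created by one copy fails `instanceof` against the other. The sketch below simulates two copies with a factory function; the package and class names are hypothetical. It also shows one common workaround: a brand stored under a `Symbol.for` key, which resolves to the same symbol from the global registry no matter which copy asks for it.

```typescript
// Stands in for evaluating one installed copy of a hypothetical package ("example-pkg").
// Every call creates a brand new class object, just like a second copy on disk would.
function loadCopyOfPackage() {
  // Symbol.for looks the key up in the global symbol registry, so every copy gets the same symbol.
  const BRAND = Symbol.for("example-pkg.Token");

  class Token {
    readonly value: string;
    constructor(value: string) {
      this.value = value;
      // Non-enumerable marker that other copies can recognise.
      Object.defineProperty(this, BRAND, { value: true });
    }
  }

  // Cross-copy type check, used instead of `instanceof Token`.
  function isToken(x: unknown): x is Token {
    return typeof x === "object" && x !== null && Reflect.get(x, BRAND) === true;
  }

  return { Token, isToken };
}

const copyA = loadCopyOfPackage(); // e.g. node_modules/example-pkg
const copyB = loadCopyOfPackage(); // e.g. node_modules/other-lib/node_modules/example-pkg

const token = new copyA.Token("abc");
console.log(token instanceof copyA.Token); // true
console.log(token instanceof copyB.Token); // false, different class object
console.log(copyB.isToken(token)); // true, the symbol brand is shared
```

The brand only helps if the package author adopts it, so as a consumer the real fix is usually still getting the package manager down to a single copy.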

All in all, this is an annoying feature of the JS ecosystem that trades the problem of resolving dependency conflicts for difficult-to-debug issues, which can absorb an absurd amount of time if you are unlucky enough to be impacted.

Personal Experiences

These are some noteworthy dependency issues I've encountered in the past. Learning by example is a thing, so maybe these will help someone out there (and double as a reminder for myself should I run into the same issue and not realise).

Inconsistent Runtimes or "The Mystery of the Busted Pipeline"

Locally the project tooling worked fine. On Bitbucket Pipelines? An obscure error appears, complaining that a ) was expected.

A few failed debugging attempts later, I notice the pipeline is using a different version of PHP from the project (7.2 instead of 7.3). The pipeline gets updated, and everything starts working.

So what was the cause? This Laravel-based project's tooling relies on the project source in order to support custom console commands. A new custom console command had been created for this PR which itself didn't use any PHP 7.3 specific features, but did depend (via dependency injection) on code that did. As custom console commands are loaded early in the Laravel boot process, the syntax error was hit before the enhanced error reporting was configured, resulting in an unhelpful error.

Funnily enough the pipeline wasn't the only thing still configured for an old version. PHPCS with the PHP Compatibility plugin was also targeting PHP 7.2, and reported the cause of the syntax error. Updating the PHP version in the pipeline was a "shot in the dark" to try and fix this, so to have the cause so helpfully reported immediately after was... Exasperating. (╯°□°)╯︵ ┻━┻)

Circular Dependency with Project and Library

- Project depends on library to function.

- Library depends on project to function (hooks into a Laravel service in the project without a fallback).

- Service within project has no references; it is concluded to be dead code and removed.

- Everything breaks. The library's dependency on the service is discovered, and similar issues in sibling libraries are discovered soon after.

- Code gets restored, and plans for a future major upgrade are updated to reflect dependencies that had not previously been considered. (╯°□°)╯︵ ┻━┻)